Whisper: Web-Scale Supervised Pretraining for Speech Recognition

Robust Speech Recognition via Large-Scale Weak Supervision

Takeaways

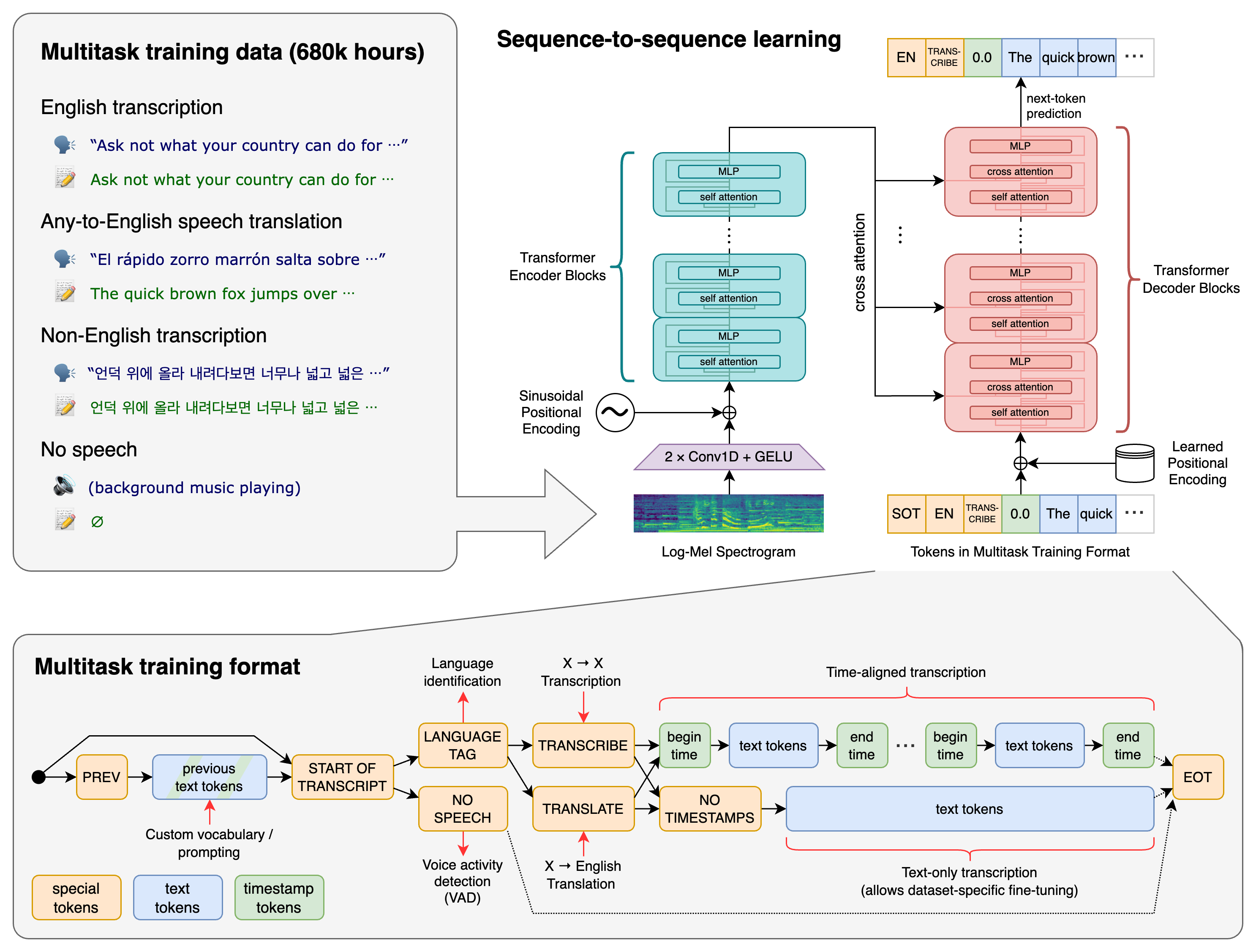

- Whisper is a multi-lingual and multi-task speech processing pipeline based on the encoder-decoder Transformer.

- Whisper is trained on 680,000 hours of labeled audio data from the Internet (weakly supervised) to predict the transcripts of the audio.

- Whisper generalizes well to standard benchmarks and is often competitive with prior fully supervised results but in a zero-shot transfer setting.

Introduction

Previous work on unsupervised pre-training

- e.g., wav2vec 2.0 and BigSSL.

- Learn directly from raw audio without labels.

- Scaled up to 1,000,000 hours of training data.

- Learn high-quality representations of speech.

- Lack of an equivalently performant decoder mapping those representations to usable outputs, necessitating a finetuning stage in order to actually perform a task.

- Risk of fine-tuning: the model may overfit to spurious patterns and fail to generalize to other datasets.

Previous work on supervised pre-training

- Pre-training across many datasets/domains in a supervised fashion.

- Higher robustness and better generalizability.

- Only a moderate amount of this data is easily available (thousands of hours).

Dataset for weakly supervised learning

- Trade-off between quality and quantity: create larger datasets for speech recognition by relaxing the requirement of gold-standard human-validated transcripts

- These new datasets are only a few times larger than the sum of existing high-quality datasets and still much smaller than prior unsupervised work.

This work

- Study the capabilities of speech processing systems trained simply to predict large amounts of transcripts of audio on the Internet.

- Scale weakly supervised speech recognition the next order of magnitude to 680,000 hours of labeled audio data.

- Remove the need for any dataset-specific fine-tuning to achieve high quality.

- Broaden the scope of weakly supervised pre-training beyond English-only speech recognition to be both multilingual and multitask.

- The resulting models (Whisper) generalize well to standard benchmarks and are often competitive with prior fully supervised results but in a zero-shot transfer setting.

Methods

Overview of Whisper.

Dataset

A minimalist approach to data pre-processing:

- Train models to predict the raw text of transcripts.

- No significant standardization

- Rely on the expressiveness of seq-to-seq models to learn to map between utterances and their transcribed form.

- Simplify the pipeline since it removes the need for a separate inverse text normalization step.

Construct a very diverse dataset from the audio that is paired with transcripts on the Internet. While diversity in audio quality can help train a model to be robust, diversity in transcript quality is not similarly beneficial.

Filtering for transcript:

- Remove machine-generated transcripts from the training dataset.

- Remove the (audio, transcript) pair if the spoken language doesn’t match the language of the transcript. Exception: if the transcript language is English, add these pairs to the dataset as `X -> en` speech translation training examples instead.

- Fuzzy de-duping of transcript texts to reduce the amount of duplication and automatically generated content in the training dataset.

- Break audio files into 30-second segments paired with the subset of the transcript that occurs within that time segment.

- Train on all audio, including segments where there is no speech, and use these segments as training data for voice activity detection.

- After training an initial model, aggregate information about its error rate on the training data sources and manually inspect these sources, sorted by a combination of high error rate and data source size, in order to identify and remove low-quality ones efficiently.

- To avoid contamination, perform de-duplication at a transcript level between the training dataset and the evaluation datasets.
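The language-mismatch rule and the 30-second segmentation above can be sketched as follows. This is a minimal illustration, not the authors' pipeline: the `Cue` container, the function names, and the choice to assign a cue to the window in which it starts are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

SEGMENT_SECONDS = 30.0  # segment length stated in the notes


@dataclass
class Cue:
    """One timed transcript line (hypothetical container for this sketch)."""
    start: float  # seconds from the start of the audio file
    end: float
    text: str


def route_pair(spoken_lang: str, transcript_lang: str) -> Optional[str]:
    """Apply the language-mismatch filter to one (audio, transcript) pair."""
    if spoken_lang == transcript_lang:
        return "transcribe"
    if transcript_lang == "en":
        return "translate"  # kept as an X -> en translation example
    return None  # mismatched and not English: drop the pair


def segment_cues(cues: List[Cue], duration: float) -> List[Tuple[float, List[Cue]]]:
    """Group cues into consecutive 30-second windows.

    Assumption: a cue belongs to the window in which it starts.
    Windows without cues are kept (empty list), so audio with no
    speech still produces a training example.
    """
    segments = []
    start = 0.0
    while start < duration:
        end = start + SEGMENT_SECONDS
        inside = [c for c in cues if start <= c.start < end]
        segments.append((start, inside))
        start = end
    return segments
```

For example, a 90-second file with cues at 1 s and 65 s yields three windows, the middle one empty.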

Model

Input Audio

- All audio is re-sampled to 16,000 Hz.

- An 80-channel log magnitude Mel spectrogram representation is computed on 25-millisecond windows with a stride of 10 milliseconds.

- Globally scale the input to be between -1 and 1 with approximately zero mean across the pre-training dataset.
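The numbers above fix the shape of the input spectrogram for one 30-second segment. The arithmetic below is a small sketch; the variable names are illustrative, and it assumes the signal is padded so that exactly one frame is emitted per stride.

```python
SAMPLE_RATE = 16_000   # Hz, from the notes
WINDOW_MS = 25         # analysis window length, from the notes
STRIDE_MS = 10         # hop between consecutive windows, from the notes
N_MELS = 80            # Mel channels, from the notes
SEGMENT_SECONDS = 30   # training segment length, from the Dataset section


def ms_to_samples(ms: int, sample_rate: int = SAMPLE_RATE) -> int:
    """Convert a duration in milliseconds to a number of samples."""
    return sample_rate * ms // 1000


window_samples = ms_to_samples(WINDOW_MS)   # samples per analysis window
hop_samples = ms_to_samples(STRIDE_MS)      # samples between window starts

# Assumption: padding makes the frame count equal to samples / hop.
n_frames = SEGMENT_SECONDS * SAMPLE_RATE // hop_samples
spectrogram_shape = (N_MELS, n_frames)

print(window_samples, hop_samples, spectrogram_shape)
```

Under these assumptions a 30-second segment becomes an 80 x 3000 array, with 400-sample windows and a 160-sample hop.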

Network

- Use off-the-shelf architecture: encoder-decoder Transformer

- The encoder processes this input representation with a small stem consisting of two convolution layers with a filter width of 3 and the GELU activation function, where the second convolution layer has a stride of two.

- Sinusoidal position embeddings are then added to the output of the stem after which the encoder Transformer blocks are applied

- Uses pre-activation residual blocks

- A final layer normalization is applied to the encoder output

- The decoder uses learned position embeddings and tied input-output token representations

- Number of parameters for Whisper-Large: 1550M
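The stride-two convolution in the stem halves the time axis, which is where the 20-millisecond resolution used for timestamps comes from. The sketch below applies the standard 1-D convolution output-length formula; `padding=1` is an assumption (the notes give only the filter width and strides), and the 3000 input frames follow from 30 seconds of audio at a 10-millisecond stride.

```python
def conv1d_out_len(length: int, kernel: int = 3, stride: int = 1, padding: int = 1) -> int:
    """Output length of a 1-D convolution (no dilation)."""
    return (length + 2 * padding - kernel) // stride + 1


frames = 3000                                          # 30 s at one frame per 10 ms
after_conv1 = conv1d_out_len(frames, stride=1)         # first layer keeps the length
after_conv2 = conv1d_out_len(after_conv1, stride=2)    # second layer halves it
ms_per_position = 10 * frames / after_conv2            # time covered by one encoder position

print(after_conv1, after_conv2, ms_per_position)
```

With these assumptions the encoder Transformer blocks see 1500 positions per segment, each covering 20 ms of audio.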

Vocabulary

- Use the same byte-level BPE text tokenizer used in GPT-2 for the English-only models.

- Refit the vocabulary (but keep the same size) for the multilingual models to avoid excessive fragmentation on other languages.

Multitask Format

In addition to predicting which words were spoken in a given audio snippet, a fully featured speech recognition system can involve many additional components, e.g., voice activity detection, speaker diarization (i.e., the process of partitioning an input audio stream into homogeneous segments according to the speaker identity), and inverse text normalization.

To reduce this complexity, this work uses a single model to perform the entire speech processing pipeline and uses a simple format to specify all tasks and conditioning information as a sequence of input tokens to the decoder.

Since the decoder is an audio-conditional language model, the authors also train it to condition on the history of the text of the transcript in the hope that it will learn to use longer-range text context to resolve ambiguous audio. Specifically, with some probability, the transcript text preceding the current audio segment is added to the decoder’s context.

- Indicate the beginning of the prediction with a `<|startoftranscript|>` token.

- First, predict the language being spoken which is represented by a unique token for each language in our training set (99 total). If there is no speech in an audio segment, the model is trained to predict a `<|nospeech|>` token.

- The next token specifies the task (either transcription or translation) with an `<|transcribe|>` or `<|translate|>` token.

- After this, specify whether to predict timestamps or not by including a `<|notimestamps|>` token for that case.

- At this point, the task and desired format are fully specified, and the output begins.

- Timestamp prediction:

  - Predict time relative to the current audio segment, quantizing all times to the nearest 20 milliseconds which matches the native time resolution of Whisper models, and add additional tokens to our vocabulary for each of these.

  - Interleave timestamp prediction with the caption tokens: the start time token is predicted before each caption’s text, and the end time token is predicted after.

  - When a final transcript segment is only partially included in the current 30-second audio chunk, we predict only its start time token for the segment when in timestamp mode, to indicate that the subsequent decoding should be performed on an audio window aligned with that time, otherwise we truncate the audio to not include the segment.

- Lastly, add a `<|endoftranscript|>` token.

- Only mask out the training loss over the previous context text, and train the model to predict all other tokens.
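The token format above can be sketched as a function that builds one training target together with its loss mask. Several details are assumptions for illustration only: the spelling of the language and timestamp tokens, the whitespace split standing in for BPE, ending the sequence directly after `<|nospeech|>`, and prepending the previous text without any extra marker token.

```python
from typing import List, Optional, Tuple

TIME_STEP = 0.02  # seconds; the 20 ms quantization described above


def timestamp_token(seconds: float) -> str:
    """Quantize a time relative to the segment start to the nearest 20 ms."""
    index = round(seconds / TIME_STEP)
    return f"<|{index * TIME_STEP:.2f}|>"  # token spelling is an assumption


def build_target(
    language: Optional[str],
    task: str = "transcribe",
    captions: Optional[List[Tuple[float, float, str]]] = None,
    timestamps: bool = True,
    previous_text: Optional[List[str]] = None,
) -> Tuple[List[str], List[bool]]:
    """Return (tokens, loss_mask); loss_mask[i] is True where the loss applies."""
    prev = list(previous_text or [])
    tokens = prev + ["<|startoftranscript|>"]
    if language is None:
        tokens.append("<|nospeech|>")          # segment without speech
    else:
        tokens.append(f"<|{language}|>")       # one token per language (spelling assumed)
        tokens.append(f"<|{task}|>")           # "transcribe" or "translate"
        if not timestamps:
            tokens.append("<|notimestamps|>")
        for start, end, text in captions or []:
            if timestamps:
                tokens.append(timestamp_token(start))
            tokens.extend(text.split())        # whitespace split stands in for BPE
            if timestamps:
                tokens.append(timestamp_token(end))
    tokens.append("<|endoftranscript|>")
    # Only the previous-context tokens are excluded from the loss.
    loss_mask = [False] * len(prev) + [True] * (len(tokens) - len(prev))
    return tokens, loss_mask
```

Calling `build_target("en", captions=[(0.0, 1.234, "hello world")])` interleaves a start and an end timestamp token around the caption words, with the end time rounded to the 20 ms grid.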

Experiments

Evaluation

The goal of Whisper is to develop a single robust speech processing system that works reliably without the need for dataset-specific fine-tuning to achieve high-quality results on specific distributions.

Evaluate Whisper in a zero-shot setting without using any of the training data for each of the datasets to measure broad generalization.

Metrics: word error rate (WER)

- Sensitive to minor formatting differences.

- Apply extensive standardization of text before the WER calculation to minimize penalization of non-semantic differences.
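To make the metric concrete, here is a minimal WER sketch: word-level edit distance divided by the number of reference words. The `normalize` function is a toy stand-in (lowercasing and punctuation stripping), far simpler than the normalizer used in the paper, and is included only to show why formatting differences matter.

```python
import re
from typing import List


def normalize(text: str) -> List[str]:
    """Toy normalizer: lowercase, drop punctuation, split on whitespace."""
    text = text.lower()
    text = re.sub(r"[^\w\s']", " ", text)
    return text.split()


def word_error_rate(reference: str, hypothesis: str, normalized: bool = True) -> float:
    """(substitutions + deletions + insertions) / number of reference words."""
    ref = normalize(reference) if normalized else reference.split()
    hyp = normalize(hypothesis) if normalized else hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # Levenshtein distance over words, keeping one row of the table at a time.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # match or substitution
        prev = curr
    return prev[-1] / len(ref)
```

Without normalization, `"Hello, world!"` against `"hello world"` counts both words as errors even though the transcripts are semantically identical; with normalization the error is zero.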

English Speech Recognition

For the previous SOTA supervised methods, there is a gap between reportedly superhuman performance in-distribution and subhuman performance out-of-distribution.

This might be due to conflating different capabilities being measured by human and machine performance on a test set.

- Humans are often asked to perform a task given little to no supervision on the specific data distribution. Thus human performance is a measure of out-of-distribution generalization.

- But machine learning models are usually evaluated after training on a large amount of supervision from the evaluation distribution, meaning that machine performance is instead a measure of in-distribution generalization.

Results:

- Although the best zero-shot Whisper model has a relatively unremarkable LibriSpeech clean-test WER of 2.5, which is roughly the performance of a modern supervised baseline or the mid-2019 SOTA, zero-shot Whisper models have very different robustness properties than supervised LibriSpeech models and outperform all benchmarked LibriSpeech models by large amounts on other datasets.

- Zero-shot Whisper models close the gap to human robustness: The best zero-shot Whisper models roughly match human accuracy and robustness.

Multi-lingual Speech Recognition

Low-data Benchmarks

Whisper performs well on Multilingual LibriSpeech. However, on VoxPopuli, Whisper significantly underperforms prior work.

Relationship between Size of Dataset and Performance

- Studied the relationship between the amount of training data for a given language and the resulting downstream zero-shot performance for that language.

- Found a strong squared correlation coefficient of 0.83 between the log of the word error rate and the log of the amount of training data per language.

- Many of the largest outliers in terms of worse-than-expected performance according to this trend are languages that have unique scripts and are more distantly related to the Indo-European languages. These differences could be due to a lack of transfer due to linguistic distance, the byte-level BPE tokenizer being a poor match for these languages or variations in data quality.
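The statistic reported above is the squared Pearson correlation between log WER and log hours of training data. The sketch below shows how such a value is computed; the data points are synthetic, generated from an exact power law purely to exercise the function, and are not results from the paper.

```python
import math
from typing import Sequence


def squared_log_correlation(hours: Sequence[float], wer: Sequence[float]) -> float:
    """Squared Pearson correlation between log(hours) and log(WER)."""
    x = [math.log(h) for h in hours]
    y = [math.log(w) for w in wer]
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy * sxy / (sxx * syy)


# Synthetic illustration only: WER falls as an exact power law of hours,
# so the points lie on a straight line in log-log space.
hours = [10.0, 100.0, 1000.0, 10000.0]
wer = [200.0 * h ** -0.5 for h in hours]
r_squared = squared_log_correlation(hours, wer)
```

Real per-language data scatter around the trend line, which is why the reported value is below 1; languages far above the line are the outliers discussed in the last bullet.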

Translation

Study the translation capabilities of Whisper by measuring the performance on the X -> en translation.

Results:

- Achieved a new SOTA of 29.1 BLEU zero-shot without using any of the CoVoST2 training data. These results may be attributed to the 68,000 hours of

X -> entranslation data for these languages in our pre-training dataset which, although noisy, is vastly larger than the 861 hours of training data forX -> entranslation in CoVoST2. - Since Whisper evaluation is zero-shot, it does particularly well on the lowest resource grouping of CoVoST2, improving over mSLAM by 6.7 BLEU. Conversely, the best Whisper model does not actually improve over Maestro and mSLAM on average for the highest resource languages.

Language Identification

The zero-shot performance of Whisper is not competitive with prior supervised work here and underperforms the supervised SOTA by 13.6%.

Robustness to Additive Noise

- There are many models that outperform the zero-shot Whisper performance under low noise (40 dB SNR), which is unsurprising given those models are trained primarily on LibriSpeech, but all models quickly degrade as the noise becomes more intensive, performing worse than the Whisper model under additive pub noise of SNR below 10 dB.

- This showcases Whisper’s robustness to noise, especially under more natural distribution shifts like the pub noise.
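A noise-robustness test of this kind needs noise mixed into clean speech at a chosen signal-to-noise ratio. The following is a minimal sketch of that mixing step using plain Python lists; the function names are illustrative and this is not the evaluation code used by the authors.

```python
import math
from typing import List, Sequence


def power(x: Sequence[float]) -> float:
    """Mean squared amplitude of a signal."""
    return sum(v * v for v in x) / len(x)


def mix_at_snr(speech: Sequence[float], noise: Sequence[float], snr_db: float) -> List[float]:
    """Scale the noise so that speech power / noise power matches snr_db, then add it."""
    if len(noise) < len(speech):
        raise ValueError("noise must be at least as long as speech")
    noise = noise[: len(speech)]
    # SNR (dB) = 10 * log10(P_speech / P_noise), solved for the noise power.
    target_noise_power = power(speech) / (10 ** (snr_db / 10))
    gain = math.sqrt(target_noise_power / power(noise))
    return [s + gain * n for s, n in zip(speech, noise)]
```

Lower `snr_db` values mean louder noise; 40 dB is nearly clean, while values below 10 dB correspond to the regime where the notes report that the other models fall behind Whisper.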

Long-form Transcription

- Whisper models are trained on 30-second audio chunks and cannot consume longer audio inputs at once.

- The authors developed a strategy to perform buffered transcription of long audio by consecutively transcribing 30-second segments of audio and shifting the window according to the timestamps predicted by the model.

- It is crucial to have beam search and temperature scheduling based on the repetitiveness and the log probability of the model predictions in order to reliably transcribe long audio.
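The buffered strategy can be sketched as a loop that advances the window by the last timestamp the model predicted. The `decode_window` callable is a hypothetical interface standing in for the model, and the fallback to a full 30-second shift when no usable timestamp is returned is an assumption added so that the loop always terminates.

```python
from typing import Callable, List, Tuple

WINDOW_SECONDS = 30.0  # Whisper's fixed audio context

# Hypothetical decoder interface: takes the window start (seconds) and returns
# (text, last_timestamp), where last_timestamp is relative to the window start.
DecodeWindow = Callable[[float], Tuple[str, float]]


def transcribe_long(duration: float, decode_window: DecodeWindow) -> List[Tuple[float, str]]:
    """Transcribe audio longer than 30 s by sliding the window along predicted timestamps."""
    results = []
    offset = 0.0
    while offset < duration:
        text, last_timestamp = decode_window(offset)
        results.append((offset, text))
        # Shift by the predicted timestamp; fall back to a full window if the
        # prediction is missing or out of range (assumption, guarantees progress).
        if 0.0 < last_timestamp <= WINDOW_SECONDS:
            offset += last_timestamp
        else:
            offset += WINDOW_SECONDS
    return results
```

Because each shift depends on a predicted timestamp, one bad prediction changes where every later window starts, which is why the decoding heuristics in the ablation section matter.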

Results: Whisper is competitive with state-of-the-art commercial and open-source ASR systems in long-form transcription.

Comparison with Human Performance

These results indicate that Whisper’s English ASR performance is not perfect but very close to human-level accuracy.

Ablations

Model Scaling

Concerns with using a large but noisy dataset:

- Although it may look promising to begin with, the performance of models trained on this kind of data may saturate at the inherent quality level of the dataset.

- As capacity and compute spent training on the dataset increases, models may learn to exploit the idiosyncrasies of the dataset, and their ability to generalize robustly to out-of-distribution data could even degrade.

Study the zero-shot generalization of Whisper models as a function of the model size.

- With the exception of English speech recognition, performance continues to increase with model size across multilingual speech recognition, speech translation, and language identification.

- The diminishing returns for English speech recognition could be due to saturation effects from approaching human-level performance.

Dataset Scaling

Study how important the raw dataset size is to Whisper’s performance.

Results:

- All increases in the dataset size result in improved performance on all tasks

- The general trend across tasks of diminishing returns when moving from 54,000 hours to the full dataset size of 680,000 hours has two possible explanations:

  - The current best Whisper models are under-trained relative to dataset size, and performance could be further improved by a combination of longer training and larger models.

  - We are nearing the end of performance improvements from dataset size scaling for speech recognition.

- Further analysis is needed to characterize “scaling laws” for speech recognition in order to decide between these explanations.

Multitask and Multilingual Transfer

A potential concern with jointly training a single model on many tasks and languages is the possibility of negative transfer where interference between the learning of several tasks results in performance worse than would be achieved by training on only a single task or language.

Compare the performance of models trained on just English speech recognition with the standard multitask and multilingual training setup.

Results:

- For small models trained with moderate amounts of compute, there is indeed negative transfer between tasks and languages: joint models underperform English-only models trained for the same amount of compute.

- Multitask and multi-lingual models scale better and for the largest experiments outperform their English-only counterparts demonstrating positive transfer from other tasks.

Text Normalization

There is a risk of overfitted text normalization.

Compare the performance of Whisper using the proposed normalizer versus an independently developed one from another project.

Results:

- On most datasets, the two normalizers perform similarly.

- On some datasets, the proposed normalizer reduces the WER of Whisper significantly more. The differences in reduction can be traced down to different formats used by the ground truth and how the two normalizers are penalizing them.

Strategies for Reliable Long-form Transcription

Transcribing long-form audio using Whisper relies on accurate prediction of the timestamp tokens to determine the amount to shift the model’s 30-second audio context window by, and inaccurate transcription in one window may negatively impact transcription in the subsequent windows.

Strategies to avoid failure cases of long-form transcription:

- Beam search: Use beam search with 5 beams using the log probability as the score function, to reduce repetition looping which happens more frequently in greedy decoding.

- Temperature fallback: The temperature starts with 0, i.e. always selecting the tokens with the highest probability, and increases by 0.2 up to 1.0 when either the average log probability over the generated tokens is lower than −1 or the generated text has a gzip compression rate higher than 2.4.

- Previous text conditioning: Providing the transcribed text from the preceding window as previous-text conditioning when the applied temperature is below 0.5 further improves the performance.

- Voice activity detection: The probability of the `<|nospeech|>` token alone is not sufficient to distinguish a segment with no speech, but combining the no-speech probability threshold of 0.6 and the average log-probability threshold of −1 makes the voice activity detection of Whisper more reliable.

- Initial timestamp constraint: To avoid a failure mode where the model ignores the first few words in the input, the authors constrained the initial timestamp token to be between 0.0 and 1.0 second.
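The temperature fallback and the combined no-speech check can be sketched as below, using the thresholds quoted in the list. The `decode` callable is a hypothetical stand-in for the model, `zlib` (DEFLATE, the same compression scheme gzip uses) is assumed as the compressor, and the direction of the two voice-activity comparisons is this sketch's reading of the description.

```python
import zlib
from typing import Callable, Tuple

TEMPERATURES = (0.0, 0.2, 0.4, 0.6, 0.8, 1.0)  # start at 0, step 0.2, stop at 1.0
LOGPROB_THRESHOLD = -1.0       # average log probability threshold
COMPRESSION_THRESHOLD = 2.4    # compression rate threshold
NO_SPEECH_THRESHOLD = 0.6      # no-speech probability threshold

# Hypothetical decoder interface: temperature -> (text, average log probability).
Decode = Callable[[float], Tuple[str, float]]


def compression_ratio(text: str) -> float:
    """Raw size over compressed size; repetitive text compresses well and scores high."""
    raw = text.encode("utf-8")
    return len(raw) / len(zlib.compress(raw))


def decode_with_fallback(decode: Decode) -> Tuple[str, float, float]:
    """Retry at higher temperatures while the output looks repetitive or unlikely."""
    for temperature in TEMPERATURES:
        text, avg_logprob = decode(temperature)
        too_repetitive = compression_ratio(text) > COMPRESSION_THRESHOLD
        too_unlikely = avg_logprob < LOGPROB_THRESHOLD
        if not (too_repetitive or too_unlikely):
            break
    return text, avg_logprob, temperature


def is_silence(no_speech_prob: float, avg_logprob: float) -> bool:
    """Treat a window as silent only when both signals agree (assumed reading)."""
    return no_speech_prob > NO_SPEECH_THRESHOLD and avg_logprob < LOGPROB_THRESHOLD
```

If every temperature fails the checks, this sketch returns the result from the final attempt at temperature 1.0 rather than raising.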

Limitations

Improved Decoding Strategies

Perception-related errors:

- e.g., confusing similar-sounding words.

- Larger models have made steady and reliable progress on reducing perception-related errors.

Non-perceptual errors:

- e.g., getting stuck in repeat loops, not transcribing the first or last few words of an audio segment, or outputting a transcript entirely unrelated to the actual audio.

- More stubborn in nature.

- They are a combination of failure modes of seq2seq models, language models, and text-audio alignment.

- Potential solutions:

  - Fine-tuning on a high-quality supervised dataset.

  - Using reinforcement learning to more directly optimize for decoding performance.

Increase Training Data For Lower-Resource Languages

Whisper’s speech recognition performance is still quite poor on many languages.

The pre-training dataset is currently very English-heavy due to biases of the data collection pipeline.

A targeted effort at increasing the amount of data for these rarer languages could result in a large improvement to average speech recognition performance even with only a small increase in the overall training dataset size.

Studying Fine-Tuning

This work focused on the robustness properties of speech processing systems and as a result only studied the zero-shot transfer.

It is likely that results can be improved further by fine-tuning.

Studying the Impact of Language Models on Robustness

The authors suspect that Whisper’s robustness is partially due to its strong decoder, which is an audio-conditional LM.

It’s currently unclear to what degree the benefits of Whisper stem from training its encoder, decoder, or both.

Potential Experiments:

- Train a decoder-less CTC model.

- Study how the performance of existing speech recognition encoders changes when used together with a language model.

Adding Auxiliary Training Objectives

Whisper departs noticeably from the most recent SOTA speech recognition systems due to the lack of unsupervised pre-training or self-teaching methods. It is possible that the results could be further improved by incorporating them.